How to Build AI: A Practical DIY Guide

Learn how AI is built with a practical, DIY-focused workflow. From data prep to deployment, this guide covers steps, tools, safety, and real-world examples for hobbyists and homeowners exploring AI projects.

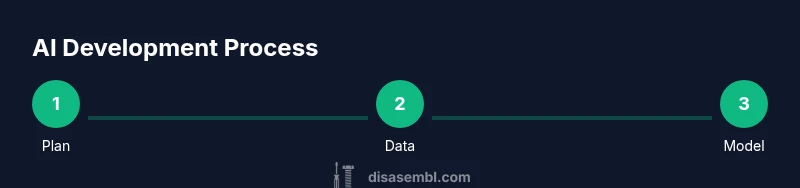

AI is built through a disciplined cycle: define a goal, gather and clean data, select an approach, train and validate, then deploy with monitoring and updates. This quick guide outlines the essential steps and common pitfalls so DIY enthusiasts can start small and scale responsibly. It emphasizes safety, ethics, and reproducibility.

What it means to build AI: framing for DIYers

Building AI means creating systems that can perceive, reason, or act on data to achieve a specific goal. For DIY enthusiasts, the journey isn't about inventing miracles; it's about composing reliable, modular components that work together. According to Disasembl, the best way to understand how AI is built is to view it as a repeatable cycle: define the objective, gather and clean data, select an approach, train and test, then deploy with ongoing monitoring. This mindset keeps projects manageable and makes progress easy to explain to teammates, family, or a client. Start by articulating a concrete task (e.g., classify product reviews as positive or negative) and a measurable success criterion (e.g., accuracy on a held-out set). From there, map the data needs, the modeling approach, and the evaluation plan. The Disasembl team emphasizes starting small, documenting all decisions, and building a modular pipeline you can reuse later. In practical terms, you’ll separate data ingestion, feature extraction, model training, and evaluation into distinct stages so you can test each one independently. This separation reduces risk and helps you scale later without rewriting everything. Building AI becomes a tangible, iterative process when you keep the scope tight and the feedback loops short.
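As a rough illustration of that stage separation, the sketch below keeps ingestion, feature extraction, training, and evaluation in separate functions. Everything in it is an assumption made for illustration: the four toy reviews, the function names, and the word-counting "model", which is a stand-in and not a recommended classifier.

```python
from collections import Counter

def ingest():
    """Stage 1: load raw examples (hypothetical toy data, hard-coded here)."""
    return [
        ("great product, works well", "positive"),
        ("terrible, broke after a day", "negative"),
        ("works great, very happy", "positive"),
        ("very bad, terrible quality", "negative"),
    ]

def extract_features(text):
    """Stage 2: turn raw text into a bag-of-words count of lowercase tokens."""
    return Counter(text.lower().replace(",", " ").split())

def train(examples):
    """Stage 3: count how often each word appears under each label."""
    word_counts = {"positive": Counter(), "negative": Counter()}
    for text, label in examples:
        word_counts[label].update(extract_features(text))
    return word_counts

def predict(model, text):
    """Score each label by summed word counts and return the higher-scoring one."""
    features = extract_features(text)
    scores = {
        label: sum(counts[word] * n for word, n in features.items())
        for label, counts in model.items()
    }
    return max(scores, key=scores.get)

def evaluate(model, examples):
    """Stage 4: fraction of examples whose prediction matches the label."""
    correct = sum(predict(model, text) == label for text, label in examples)
    return correct / len(examples)

data = ingest()
model = train(data)
# Scoring on the training data is only a smoke test of the wiring,
# not an estimate of real-world performance.
accuracy = evaluate(model, data)
```

Because each stage is its own function, you can swap one out (say, a different feature extractor) and re-run the rest unchanged.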

Data as the foundation of AI systems

Without data, you cannot train an AI. Data defines what your system can see, learn, and generalize to. Data quality starts with clear requirements, followed by gathering raw data from sources you’re licensed to use. Cleaning and preprocessing are not optional chores; they determine model quality as much as algorithm choice does. Labeling or annotating data creates the ground truth your model will try to match during training. As a DIY practitioner, consider synthetic data or rule-based labeling to bootstrap a small project. Track data provenance: document where each sample came from, how it was collected, and any transformations applied. You should also implement basic data hygiene: deduplication, handling missing values, and sanity checks. Finally, think about data governance: who owns the data, who can access it, and how you will handle deletion or correction requests. Disasembl emphasizes starting with a minimal dataset to validate your pipeline before scaling up, which reduces time and cost while building intuition for data quality. The goal is to keep signal high relative to noise and to avoid biased or leaky data (for example, test samples that also appear in the training set) that could derail evaluation.
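The hygiene steps named above (deduplication, missing values, sanity checks, provenance) might look something like the following minimal sketch. The record layout, the allowed label set, and the file name `hypothetical_reviews.csv` are all assumptions for illustration.

```python
def clean_records(records, source):
    """Deduplicate, drop incomplete rows, and attach provenance to each kept row."""
    seen = set()
    cleaned = []
    dropped = {"duplicate": 0, "missing": 0, "bad_label": 0}
    for row in records:
        text = (row.get("text") or "").strip()
        label = row.get("label")
        # Missing values: skip rows without text or without a label.
        if not text or label is None:
            dropped["missing"] += 1
            continue
        # Sanity check: only accept labels from the expected set.
        if label not in ("positive", "negative"):
            dropped["bad_label"] += 1
            continue
        # Deduplication: compare case-insensitively on the trimmed text.
        key = text.lower()
        if key in seen:
            dropped["duplicate"] += 1
            continue
        seen.add(key)
        # Provenance: record where the sample came from.
        cleaned.append({"text": text, "label": label, "source": source})
    return cleaned, dropped

raw = [
    {"text": "Works well", "label": "positive"},
    {"text": "works well ", "label": "positive"},   # duplicate after normalization
    {"text": "", "label": "negative"},              # missing text
    {"text": "Broke fast", "label": None},          # missing label
    {"text": "Meh", "label": "neutral"},            # label outside the expected set
    {"text": "Broke fast", "label": "negative"},
]
cleaned, dropped = clean_records(raw, source="hypothetical_reviews.csv")
```

Returning the `dropped` counts alongside the cleaned rows gives you a small audit trail: a sudden jump in any counter is a hint that an upstream source has changed.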

Model types and selection criteria

AI models are a family of tools rather than a single magic bullet. Your choice depends on the task, data size, compute budget, and reliability needs. For many DIY tasks, simpler models with clear behavior (like linear models or tree-based methods) can outperform flashy architectures on small datasets. If your goal involves understanding context, relationships, or language, transformer-style architectures offer strong performance but require careful data handling and more compute. Transfer learning—starting from a pre-trained base and adapting it to your task—can dramatically reduce training time and data needs. When selecting, define success criteria (speed, accuracy, recall, precision) and test several approaches against a held-out validation set. Also consider interpretability: some tasks demand models you can explain to a non-technical audience. Finally, document the rationale for your choice so future you (or a collaborator) isn’t left guessing what you did and why. Disasembl recommends starting with a baseline you can beat and measuring improvement against it.
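To make "a baseline you can beat" concrete, here is a small sketch that compares a majority-class baseline against a candidate model on the same validation labels. The labels, the validation texts, and the candidate's predictions are made-up values for illustration only.

```python
from collections import Counter

def majority_baseline(train_labels):
    """Return a predictor that always outputs the most common training label."""
    most_common = Counter(train_labels).most_common(1)[0][0]
    return lambda _text: most_common

def accuracy(y_true, y_pred):
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

def precision_recall(y_true, y_pred, positive="positive"):
    """Precision and recall for the given positive class (0.0 when undefined)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

# Hypothetical data: labels seen in training and a small validation set.
train_labels = ["negative", "negative", "negative", "positive"]
val_texts = ["bad", "good", "poor", "great"]
val_labels = ["negative", "positive", "negative", "positive"]

baseline = majority_baseline(train_labels)
baseline_preds = [baseline(text) for text in val_texts]
# Stand-in for the outputs of whatever candidate model you are testing.
candidate_preds = ["negative", "positive", "positive", "positive"]

baseline_acc = accuracy(val_labels, baseline_preds)
candidate_acc = accuracy(val_labels, candidate_preds)
candidate_precision, candidate_recall = precision_recall(val_labels, candidate_preds)
```

If a candidate cannot clearly beat the majority-class baseline on the validation set, added model complexity is probably not buying you anything yet.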

Training, validation, and iteration loop

Training AI is an iterative process governed by data, algorithms, and evaluation metrics. Begin with a simple baseline and gradually add complexity. Use train/validation splits to monitor generalization and detect overfitting; if you have enough data, reserve a held-out test set, used only once at the end, for the final estimate of performance. Choose an objective function aligned with your goal (e.g., cross-entropy for classification, MSE for regression) and tune hyperparameters with a disciplined approach rather than guesswork. Regularization techniques, such as early stopping or weight decay, help keep models from memorizing training data. Track metrics carefully and visualize learning curves to spot when the model is still learning or has plateaued. In practice, run several small experiments and compare results on the same validation set so the comparison is fair. Finally, maintain reproducibility by fixing random seeds, recording library versions, and saving model checkpoints. A pragmatic DIY project benefits from incremental wins rather than a single, large leap; this approach also aligns with how Disasembl guides hobbyists through data-centric development.
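The sketch below shows one way early stopping, a fixed seed, and checkpointing can fit together, using a one-feature logistic regression trained by plain gradient descent. The synthetic data generator, the learning rate, the patience value, and the improvement threshold are assumptions picked for the demo, not tuned recommendations.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def cross_entropy(w, b, data):
    """Mean binary cross-entropy of a 1-D logistic model on (x, y) pairs."""
    eps = 1e-12
    total = 0.0
    for x, y in data:
        p = sigmoid(w * x + b)
        total -= y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
    return total / len(data)

def make_data(rng, n):
    """Synthetic data: the label is 1 when a noisy copy of x is positive."""
    data = []
    for _ in range(n):
        x = rng.uniform(-3, 3)
        y = 1 if x + rng.gauss(0, 1) > 0 else 0
        data.append((x, y))
    return data

def train(seed, max_epochs=200, lr=0.1, patience=5):
    rng = random.Random(seed)  # fixed seed makes the whole run repeatable
    train_set = make_data(rng, 80)
    val_set = make_data(rng, 40)
    w, b = 0.0, 0.0
    # The "checkpoint" is simply the best parameters seen on validation so far.
    best = {"loss": cross_entropy(w, b, val_set), "w": w, "b": b, "epoch": 0}
    stale = 0
    for epoch in range(1, max_epochs + 1):
        # One full-batch gradient step on the training set.
        n = len(train_set)
        grad_w = sum((sigmoid(w * x + b) - y) * x for x, y in train_set) / n
        grad_b = sum(sigmoid(w * x + b) - y for x, y in train_set) / n
        w -= lr * grad_w
        b -= lr * grad_b
        val_loss = cross_entropy(w, b, val_set)
        if val_loss < best["loss"] - 1e-4:
            best = {"loss": val_loss, "w": w, "b": b, "epoch": epoch}
            stale = 0
        else:
            stale += 1
            if stale >= patience:
                break  # validation loss stopped improving: stop early
    return best

run_a = train(seed=0)
run_b = train(seed=0)  # same seed, so the result should match run_a exactly
```

Returning the best checkpoint instead of the final parameters means a few unproductive epochs at the end do not cost you anything.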

Deployment considerations and monitoring

Deploying AI means turning a model into an accessible service with reliable performance. Start by packaging your model in a reproducible environment (e.g., container or virtual environment) and setting up a lightweight inference pipeline. Consider latency, resource usage, and scalability; for toy projects you can host locally, but plan for cloud options if you expect growth. Implement basic monitoring: track input distributions, prediction latency, error rates, and model drift over time. Establish alerting for anomalies and implement a simple rollback plan if performance degrades. Data provenance continues after deployment: log input sources and transformations to aid debugging and audits. Security matters too: protect endpoints, sanitize inputs, and guard against data leakage. Finally, plan for updates: retrain on fresh data, roll out gradually, and document version changes. Disasembl’s practical approach stresses small, testable deployments that you can replicate across projects to build momentum and confidence.
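A very small monitoring layer along these lines could be sketched as follows. The `Monitor` class, the choice of review length as the tracked input feature, and the threshold of 3 standard errors are illustrative assumptions; a real deployment would likely track several features and use a more careful drift test.

```python
import statistics
import time

class Monitor:
    """Tracks prediction latency and a simple mean-shift drift score."""

    def __init__(self, reference_values, drift_threshold=3.0):
        # Reference statistics come from data the model was validated on.
        self.ref_mean = statistics.mean(reference_values)
        self.ref_std = statistics.stdev(reference_values)
        self.drift_threshold = drift_threshold
        self.latencies = []
        self.values = []

    def timed_predict(self, predict_fn, text):
        """Call the model, recording latency and the input's word count."""
        start = time.perf_counter()
        result = predict_fn(text)
        self.latencies.append(time.perf_counter() - start)
        self.values.append(len(text.split()))
        return result

    def drift_score(self):
        """Shift of the live mean from the reference mean, in standard errors."""
        n = len(self.values)
        if n == 0 or self.ref_std == 0:
            return 0.0
        standard_error = self.ref_std / n ** 0.5
        return abs(statistics.mean(self.values) - self.ref_mean) / standard_error

    def has_drifted(self):
        return self.drift_score() > self.drift_threshold

def fake_model(text):
    """Hypothetical stand-in for a deployed model."""
    return "positive"

# Word counts of reviews seen during validation (made-up numbers).
reference_lengths = [10, 12, 11, 9, 13, 10, 11, 12]
monitor = Monitor(reference_lengths)

# Live traffic that is much longer than anything in the reference data.
for _ in range(4):
    monitor.timed_predict(fake_model, " ".join(["word"] * 40))
```

Here `monitor.has_drifted()` flags the run because the live inputs average 40 words against a reference mean of 11, which is the kind of signal that should trigger an alert or a closer look before you trust new predictions.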

Ethical, legal, and safety considerations

Building AI with real impact requires attention to ethics and compliance. Respect user privacy and comply with data protection requirements; obtain consent where needed and minimize the collection of sensitive information. Be mindful of bias in data and algorithms; test for disparate impact and biases that could harm underrepresented groups. Ensure transparency by communicating limitations and uncertainties to stakeholders. Consider safety: implement input validation, monitor outputs for harmful content, and provide clear failure modes. Intellectual property matters too: respect licenses when using pre-trained components or data. Finally, plan for accountability: assign responsibility for model performance and establish processes for reporting issues. The Disasembl team emphasizes a cautious, principled approach so DIY builders can avoid costly missteps while learning.
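One simple way to start testing for disparate impact is to compare how often each group receives the favorable prediction. The sketch below assumes you have a group attribute for each example; the group names and predictions are made up. A ratio below 0.8 is sometimes treated as a warning sign (the "four-fifths" rule of thumb), but that cutoff is a heuristic and not a guarantee of fairness or unfairness.

```python
from collections import defaultdict

def positive_rates(groups, predictions, positive=1):
    """Share of each group that received the positive prediction."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        if pred == positive:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Lowest group rate divided by the highest group rate (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0

# Hypothetical evaluation data: five examples from each of two groups.
groups = ["a"] * 5 + ["b"] * 5
predictions = [1, 1, 1, 1, 0, 1, 1, 0, 0, 0]

rates = positive_rates(groups, predictions)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8
```

With these numbers group "a" gets the positive outcome 80% of the time and group "b" 40% of the time, so the ratio is 0.5 and the result is flagged for a closer look at the data and the model.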

A hands-on, small-scale example project

To anchor the concepts, we’ll outline a small project: sentiment classification for a product-review dataset. Define objective: categorize reviews as positive or negative; success metric: accuracy above 85% on a held-out set. Gather a compact dataset (labels included) or generate synthetic data to bootstrap the workflow. Preprocess: normalize text, remove noise, and tokenize. Model idea: start with a simple logistic regression or a small neural network using bag-of-words features; compare with a lightweight transformer approach if resources permit. Train, validate, and iterate on hyperparameters. Evaluate with confusion matrices and ROC-AUC if applicable. Finally, deploy as a microservice with a minimal API and monitor performance on new data. Keeping the project small allows you to learn the pipeline end-to-end and reuse the components in future experiments. This exercise also demonstrates why data quality and clear objectives matter more than complex architectures.
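A bare-bones version of this project, using only the Python standard library, might look like the sketch below: tokenize, build bag-of-words vectors, fit a logistic regression with stochastic gradient descent, and summarize held-out results in a confusion matrix. The ten reviews, the learning rate, and the epoch count are invented for the demo; with real data you would more likely reach for a library such as scikit-learn and a far larger dataset.

```python
import math
import re

def tokenize(text):
    """Normalize to lowercase and keep alphabetic tokens only."""
    return re.findall(r"[a-z']+", text.lower())

def build_vocab(texts):
    vocab = {}
    for text in texts:
        for token in tokenize(text):
            vocab.setdefault(token, len(vocab))
    return vocab

def vectorize(text, vocab):
    """Bag-of-words counts; words outside the vocabulary are ignored."""
    vec = [0.0] * len(vocab)
    for token in tokenize(text):
        if token in vocab:
            vec[vocab[token]] += 1.0
    return vec

def train_logreg(X, y, epochs=200, lr=0.5):
    """Logistic regression fitted with per-example gradient steps."""
    w, b = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        for xi, yi in zip(X, y):
            z = sum(wj * xj for wj, xj in zip(w, xi)) + b
            err = 1.0 / (1.0 + math.exp(-z)) - yi
            w = [wj - lr * err * xj for wj, xj in zip(w, xi)]
            b -= lr * err
    return w, b

def predict(text, vocab, w, b):
    z = sum(wj * xj for wj, xj in zip(w, vectorize(text, vocab))) + b
    return 1 if z > 0 else 0

def confusion_matrix(y_true, y_pred):
    pairs = list(zip(y_true, y_pred))
    return {
        "tp": sum(t == 1 and p == 1 for t, p in pairs),
        "fp": sum(t == 0 and p == 1 for t, p in pairs),
        "fn": sum(t == 1 and p == 0 for t, p in pairs),
        "tn": sum(t == 0 and p == 0 for t, p in pairs),
    }

# Hypothetical toy reviews: 1 = positive, 0 = negative.
train_data = [
    ("love it, works great", 1),
    ("great value and excellent quality", 1),
    ("excellent, would buy again", 1),
    ("terrible, stopped working", 0),
    ("poor quality, broke quickly", 0),
    ("awful product, do not buy", 0),
]
held_out = [
    ("works great, excellent value", 1),
    ("love it, excellent value", 1),
    ("terrible product, broke quickly", 0),
    ("awful, stopped working", 0),
]

vocab = build_vocab(text for text, _ in train_data)
X = [vectorize(text, vocab) for text, _ in train_data]
y = [label for _, label in train_data]
w, b = train_logreg(X, y)

preds = [predict(text, vocab, w, b) for text, _ in held_out]
cm = confusion_matrix([label for _, label in held_out], preds)
accuracy = (cm["tp"] + cm["tn"]) / len(held_out)
```

The held-out reviews here reuse words from the training set, so the result says nothing about real-world accuracy; the point is to see every stage of the pipeline run end to end.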

Scaling up: when and how to seek help

Once you’ve built confidence with a small project, you can scale by adding data, refining pipelines, and improving automation. Break the expansion into repeatable stages: data management, model updates, monitoring, and governance policies. If you encounter compute constraints, explore more efficient architectures, quantization, or distillation strategies to reduce resource needs. Collaboration helps: seek feedback from peers, online communities, or local maker groups.
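To give a feel for what quantization does, the sketch below maps a handful of floating-point weights onto 8-bit integers with a single shared scale, then maps them back to see how much precision was lost. This is a simplified symmetric scheme with made-up weights; real toolkits typically add per-channel scales, calibration, and other refinements.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the integer codes."""
    return [q * scale for q in quantized]

weights = [0.52, -1.27, 0.003, 0.9]  # hypothetical model weights
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

# The rounding error per weight is at most half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

Each weight now needs one byte instead of four or eight, at the cost of a small rounding error; note how the tiny weight 0.003 rounds to zero, which is the kind of loss you would check against your validation metric.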

Tools & Materials

- Computer with Python installed (64-bit OS; at least 8 GB RAM; IDE like VS Code)

- Python 3.x (include pip and venv support)

- Data storage and backups (SSD preferred; regular backups)

- Jupyter/Notebook environment (for experiments and visualization)

- Data labeling tools, optional (for manual annotation tasks)

- Basic libraries (numpy, pandas, scikit-learn; transformers is optional)

- Access to datasets, licensed or synthetic (ensure permissions and privacy considerations)

- GPU hardware, optional (helpful for larger models; not required for small projects)

Steps

Estimated time: 2-3 weeks

1. Define objective and success metrics

State the problem clearly and decide how you will measure success. Write down the target metric, data sources, and constraints so every future step aligns with the goal.

Tip: Create a one-page spec before you touch code.

2. Collect and prepare data

Assemble a small, representative dataset. Clean data to remove noise, handle missing values, and standardize formats. Label a subset if required for supervision.

Tip: Document data sources and preprocessing steps for reproducibility.

3. Choose a model approach

Pick a baseline model suitable for your data size and task. Consider transfer learning if your data is limited. Keep interpretability in mind.

Tip: Start with a simple baseline to establish a performance floor.

4. Split data for training and validation

Create training, validation, and optional test sets. Ensure splits are stratified if dealing with categories and that data leakage is avoided.

Tip: Use the same random seed for all experiments to ensure fair comparisons.

5. Train and tune

Run baseline training and gradually adjust hyperparameters. Record results and compare against the baseline on the validation set.

Tip: Change one parameter at a time to understand its impact.

6. Evaluate and iterate

Assess performance with appropriate metrics and error analysis. Identify failure modes and implement targeted improvements.

Tip: Visualize learning curves to spot overfitting early.

7. Deploy with monitoring

Package the model, set up a lightweight API, and implement basic monitoring for latency and accuracy. Plan for data drift handling.

Tip: Start with a small deployment and scale gradually.

8. Maintain and update

Regularly retrain on fresh data, log predictions, and update documentation. Establish a rollback plan if performance degrades.

Tip: Automate parts of the pipeline to reduce manual work.
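For the data-splitting step, a stratified split with a fixed seed can be sketched in a few lines of standard-library Python. The function name, the 25% validation fraction, the seed value, and the placeholder reviews are assumptions for illustration; scikit-learn's `train_test_split` with its `stratify` argument offers the same idea ready-made.

```python
import random
from collections import defaultdict

def stratified_split(examples, val_fraction=0.25, seed=42):
    """Split (text, label) pairs so each label keeps a similar share in both sets."""
    by_label = defaultdict(list)
    for example in examples:
        by_label[example[1]].append(example)
    rng = random.Random(seed)  # same seed gives the same split every run
    train, val = [], []
    for label in sorted(by_label):
        items = by_label[label][:]
        rng.shuffle(items)
        n_val = max(1, round(len(items) * val_fraction))
        val.extend(items[:n_val])
        train.extend(items[n_val:])
    return train, val

# Hypothetical imbalanced dataset: 8 positive and 4 negative reviews.
examples = [(f"positive review {i}", "positive") for i in range(8)]
examples += [(f"negative review {i}", "negative") for i in range(4)]

train_set, val_set = stratified_split(examples)

# Leakage check: no example should appear in both sets.
overlap = set(train_set) & set(val_set)
```

Both sets keep the original 2:1 ratio of positive to negative reviews, and re-running with the same seed reproduces the split, which keeps comparisons between experiments fair.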

Got Questions?

What does it mean to build AI in practical terms?

Building AI means combining data, models, and processes to accomplish a defined task. It involves data collection, model selection, training, evaluation, and deployment with monitoring. It’s an iterative, disciplined workflow rather than a single magic solution.

Building AI means combining data, models, and processes to complete a defined task, in an iterative and disciplined way.

Do I need huge data to build AI?

Not always. Start with a small, well-labeled dataset and use simple models or transfer learning to achieve reasonable results. Synthetic data can bootstrap small projects, but always validate with real-world samples when possible.

You don’t always need huge data; start small and validate with real samples when you can.

What hardware do I need for a beginner project?

A modern computer with sufficient RAM and a stable internet connection is enough for many starter tasks. A GPU helps for larger models, but it isn’t strictly required for small classification or regression tasks.

A good modern computer suffices for many beginners; GPU helps for bigger models but isn’t required.

How long does it take to train a model?

Training time varies with data size and model complexity. Simple tasks can complete within minutes to hours, while larger projects may take days and require more compute planning.

Training time depends on data and model; small tasks can finish quickly, larger ones take longer.

How do you address bias and privacy in DIY AI?

Assess data for representativeness, test for disparate impacts, and minimize data collection. Use clear privacy practices and document limitations so users understand the system’s boundaries.

Check for bias, protect privacy, and be transparent about what the AI can and cannot do.

What to Remember

- Define a concrete objective and success metric.

- Build a modular data pipeline with clear provenance.

- Start simple; iterate with measurable experiments.

- Deploy gradually and monitor performance over time.