How to Assemble AI: A Practical DIY Guide for Beginners

Learn how to assemble AI from goals to deployment with practical, step-by-step guidance, robust data governance, risk management, and safety considerations. Disasembl.

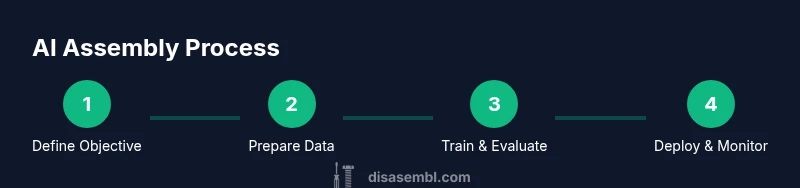

You're about to learn how to assemble a practical AI system from concept to deployment. The process covers defining the objective, sourcing compliant data, choosing a model approach, setting up a reproducible environment, training a baseline, evaluating performance, and establishing monitoring and governance. You’ll need a capable computer, data with clear licensing, essential software, and a plan for ongoing maintenance to ensure reliability and safety.

Foundations: What it means to assemble AI

According to Disasembl, assembling AI is not a single feature add-on; it's a structured project that requires clear goals, ethical guardrails, and an iterative mindset. In practice, you start by translating a real-world problem into a measurable objective, then map how data, algorithms, and deployment choices will connect to that objective. This section explores how to frame the project, what you should avoid, and how to set up the mental model for the work ahead.

Key ideas include: define the user need, set success metrics, and write a lightweight plan that covers data governance, model accountability, and safety constraints. You should treat AI assembly as a system-building exercise, not a one-off coding sprint. Emphasize reproducibility by using version control, well-documented experiments, and modular code. If you encounter tradeoffs—accuracy versus latency, privacy versus utility—document them clearly so decisions are transparent. This approach helps you stay focused and flexible as you progress through data collection, model selection, and deployment.

Practical mindset: begin with a minimal viable prototype that demonstrates core functionality, then incrementally add features. Record every decision in a living notebook and keep your team aligned on goals. The Disasembl team has found that starting with a small, testable problem reduces scope creep and builds confidence for broader AI assembly tasks.

Defining Objectives and Constraints

Define the objective in practical terms: what user need does the AI address, and how will you measure success? Establish constraints such as data availability, compute limits, latency targets, and regulatory compliance. Translate these into concrete requirements for data quality, labeling standards, fairness checks, and audit trails. Create a success scorecard that pairs model metrics (accuracy, precision, and recall, where appropriate) with business KPIs such as time saved or improved decision quality. If the project has multiple stakeholders, document conflicting priorities and propose a decision framework (for example, weighted scoring or a go/no-go gate). Consider the ethical implications early: what could go wrong, who bears the risk, and how will you mitigate harm? You should also set boundaries on data usage and model behavior. This clarity helps prevent scope creep and keeps the project manageable as you move into data collection and model selection.

Tip: write down your minimum viable outcome before collecting data so you can test whether your approach really delivers value.
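The weighted scoring and go/no-go gate mentioned above can be sketched in a few lines of Python. This is only an illustration: the `Criterion` class, the criterion names, the weights, and the 0.75 threshold are all assumptions chosen for the example, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float   # relative importance agreed with stakeholders (assumed)
    score: float    # measured value, normalized to the range 0.0-1.0
    minimum: float  # hard floor; falling below it blocks the release


def weighted_score(criteria):
    """Return the weight-normalized average score across all criteria."""
    total_weight = sum(c.weight for c in criteria)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(c.weight * c.score for c in criteria) / total_weight


def go_no_go(criteria, threshold):
    """Go only if every hard floor is met and the weighted score clears the threshold."""
    failed = [c.name for c in criteria if c.score < c.minimum]
    overall = weighted_score(criteria)
    return (not failed and overall >= threshold), overall, failed


# Hypothetical scorecard mixing model metrics with one business KPI.
scorecard = [
    Criterion("recall", weight=3, score=0.80, minimum=0.75),
    Criterion("precision", weight=2, score=0.90, minimum=0.70),
    Criterion("time_saved", weight=1, score=0.50, minimum=0.25),
]

decision, overall, failed = go_no_go(scorecard, threshold=0.75)
print("go" if decision else "no-go", round(overall, 3), failed)
```

Keeping the hard floors separate from the weighted average means a strong score on one criterion cannot hide a failure on another, which is one way to make the tradeoffs between stakeholders explicit.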

Data Strategy: Collection, Licensing, Privacy

Data is the lifeblood of AI assembly. This section covers how to source data responsibly, verify licensing, and protect privacy. Start with data provenance: document where data comes from, who owns it, and under what terms it can be used. Confirm licensing compliance, and for any personal data, obtain consent and keep consent logs. Establish data quality criteria: completeness, accuracy, timeliness, and consistency. Implement labeling standards and audit trails so you can reproduce results and trace decisions. Consider synthetic data when real data is scarce, but validate that synthetic data aligns with real-world distributions. Also plan for bias scanning and fairness checks from the outset. By building a data governance framework, you reduce legal and ethical risks and improve model reliability over time. Disasembl analysis shows that strong data provenance correlates with more stable performance and easier debugging during iterations.
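One lightweight way to make provenance and quality checks concrete is a small record per dataset plus a checklist function. The sketch below is an assumption-laden example: `DatasetRecord`, `ALLOWED_LICENSES`, and the field names are hypothetical, and the license list would come from your own legal review rather than from this code.

```python
from dataclasses import dataclass

# Hypothetical allow-list; replace with licenses your project has approved.
ALLOWED_LICENSES = {"CC0-1.0", "CC-BY-4.0", "internal-approved"}


@dataclass
class DatasetRecord:
    name: str
    source: str                  # where the data came from
    owner: str                   # who holds the rights
    license: str                 # terms under which it can be used
    contains_personal_data: bool
    consent_logged: bool = False


def eligibility_issues(record):
    """Return a list of human-readable problems; an empty list means eligible."""
    issues = []
    if not record.source or not record.owner:
        issues.append("provenance incomplete: source and owner are required")
    if record.license not in ALLOWED_LICENSES:
        issues.append(f"license not approved: {record.license}")
    if record.contains_personal_data and not record.consent_logged:
        issues.append("personal data present without a consent log")
    return issues


def completeness(rows, required_fields):
    """Fraction of rows in which every required field is present and non-empty."""
    if not rows:
        return 0.0
    complete = sum(
        1 for row in rows
        if all(row.get(name) not in (None, "") for name in required_fields)
    )
    return complete / len(rows)


record = DatasetRecord(
    name="support_tickets_sample",
    source="internal helpdesk export",
    owner="Example Corp",
    license="internal-approved",
    contains_personal_data=True,
)
print(eligibility_issues(record))
```

Here the record fails the check because personal data is flagged but no consent log has been recorded, which is the kind of gap the eligibility checklist is meant to surface before any labeling starts.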

Architecture and Pipelines: Choosing a Path

AI assembly hinges on selecting the right architecture and a robust data pipeline. Decide whether you’ll deploy a lightweight rule-based layer, a traditional machine learning model, or a modern deep learning approach. Each path has tradeoffs in performance, interpretability, and resource needs. Draft a modular pipeline: data ingestion, preprocessing, feature extraction, model inference, and output routing. Use abstractions for data contracts and interface boundaries so you can swap components with minimal disruption. Define approximate latency targets and scale plans for training and serving. Implement continuous integration for experiments, and maintain a clear branching strategy to separate research from production. This structure makes it easier to test hypotheses, compare models, and iterate toward a reliable solution.
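The modular pipeline described above can be illustrated with plain functions that share one simple data contract: each stage takes a dictionary and returns a new one. Everything in this sketch (the stage names, the refund keyword rule, the queue names) is a made-up example, standing in for whichever rule-based or learned component you choose.

```python
from typing import Any, Callable, Dict, List

Record = Dict[str, Any]
Stage = Callable[[Record], Record]


def ingest(record: Record) -> Record:
    # Data ingestion: normalize the raw input into a text field.
    return {**record, "text": str(record["raw"])}


def preprocess(record: Record) -> Record:
    return {**record, "text": record["text"].strip().lower()}


def extract_features(record: Record) -> Record:
    text = record["text"]
    features = {"length": len(text), "mentions_refund": "refund" in text}
    return {**record, "features": features}


def rule_based_model(record: Record) -> Record:
    # A lightweight rule-based layer; a trained model could replace this stage.
    label = "billing" if record["features"]["mentions_refund"] else "general"
    return {**record, "label": label}


def route_output(record: Record) -> Record:
    queue = "finance_queue" if record["label"] == "billing" else "support_queue"
    return {**record, "queue": queue}


def run_pipeline(raw: str, stages: List[Stage]) -> Record:
    """Pass a record through each stage in order."""
    record: Record = {"raw": raw}
    for stage in stages:
        record = stage(record)
    return record


DEFAULT_STAGES: List[Stage] = [
    ingest, preprocess, extract_features, rule_based_model, route_output,
]

result = run_pipeline("  I would like a REFUND, please. ", DEFAULT_STAGES)
print(result["label"], result["queue"])
```

Because every stage honors the same contract, swapping `rule_based_model` for another function that also sets `label` leaves the rest of the pipeline unchanged.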

Environment Setup and Reproducibility

Reproducibility is non-negotiable in AI assembly. Create a clean development environment with explicit dependencies and version control. Use virtual environments or containers to lock down Python versions and library sets. Establish a disciplined experiment workflow: a unique run ID, recorded hyperparameters, and a results dashboard. Keep a modular codebase with clear interfaces and unit tests for each component, from data loading to inference. Document platform specifics, such as OS, library versions, and hardware used. This foundation makes it possible to replicate results, onboard new team members quickly, and protect against drift as the project grows.
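As a minimal sketch of the experiment workflow described above, the standard library alone can capture a unique run ID, the hyperparameters, and platform details. The function names and the record layout are assumptions for illustration; dedicated tools such as MLflow or Weights & Biases record similar information with more features.

```python
import hashlib
import json
import platform
import uuid
from datetime import datetime, timezone


def new_run_record(hyperparameters, seed):
    """Build a record describing one experiment run."""
    # Sorting keys makes the hash independent of dictionary ordering.
    config_json = json.dumps(hyperparameters, sort_keys=True)
    return {
        "run_id": uuid.uuid4().hex,
        "started_at": datetime.now(timezone.utc).isoformat(),
        "seed": seed,
        "hyperparameters": hyperparameters,
        "config_hash": hashlib.sha256(config_json.encode("utf-8")).hexdigest()[:12],
        "python_version": platform.python_version(),
        "os": platform.platform(),
    }


def to_json_line(record):
    """Serialize a run record as one line, ready to append to a log file."""
    return json.dumps(record, sort_keys=True)


run = new_run_record({"learning_rate": 0.1, "epochs": 3}, seed=42)
print(to_json_line(run))
```

Appending one such line per run to a file under version control gives a simple, searchable history; the `config_hash` makes it easy to spot runs that used identical settings.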

Training, Evaluation, and Iteration

With the data and architecture in place, begin with a baseline model to establish a reference point. Split data into training, validation, and test sets, and define evaluation metrics aligned to the objective. Run multiple experiments to explore hyperparameters, feature representations, and regularization strategies. Perform ablation studies to identify which components contribute most to performance. Watch for data leakage and overfitting; use cross-validation where feasible. Keep an eye on compute costs and training time, and document decisions and results so they can be reproduced. Iterate until you achieve a stable, generalizable model that meets the predefined success criteria.
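To make the split-and-baseline step concrete, here is a small sketch using only the standard library. The 70/15/15 split, the toy spam/ham data, and the majority-class baseline are assumptions chosen for illustration; in practice you would likely use your ML framework's utilities and a baseline suited to your task.

```python
import random
from collections import Counter


def split_dataset(examples, val_fraction=0.15, test_fraction=0.15, seed=0):
    """Shuffle with a fixed seed, then cut into train, validation, and test sets."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    n_val = int(len(shuffled) * val_fraction)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test


def majority_baseline(train):
    """Return a model that always predicts the most common training label."""
    most_common_label, _ = Counter(label for _, label in train).most_common(1)[0]
    return lambda features: most_common_label


def accuracy(model, examples):
    if not examples:
        raise ValueError("cannot compute accuracy on an empty set")
    correct = sum(1 for features, label in examples if model(features) == label)
    return correct / len(examples)


# Toy data: (features, label) pairs; one in four examples is "spam".
examples = [(i, "spam" if i % 4 == 0 else "ham") for i in range(100)]
train, val, test = split_dataset(examples, seed=42)

baseline = majority_baseline(train)
print("validation accuracy:", accuracy(baseline, val))
```

The baseline is fit on the training set only and scored on validation data, with the test set left untouched until the final comparison; that separation is the simplest guard against the leakage described above. Any later model should beat this number to justify its added complexity.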

Deployment, Monitoring, and Maintenance

Deployment converts an experiment into a usable product. Choose a deployment pattern (batch inference vs. real-time streaming) and establish monitoring dashboards for latency, accuracy drift, and error rates. Implement alerting for anomalies and a rollback plan in case of regressions. Set up data pipelines for continuous data ingestion and periodic retraining cycles, as well as automated tests for every deployment. Plan for governance: model cards, audit logs, and documentation of decisions. Ensure user-facing outputs are explainable where possible, and provide a feedback loop to capture real-world performance signals for future improvements.
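A monitoring check for accuracy drift can be as simple as comparing a rolling window of recent outcomes against the accuracy measured before release. The class below is a minimal sketch under that assumption; the name `RollingAccuracyMonitor`, the window size, and the 0.05 tolerance are illustrative, and it presumes that ground-truth labels eventually arrive through the feedback loop.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track recent prediction outcomes and flag a drop below the baseline."""

    def __init__(self, baseline_accuracy, max_drop=0.05, window=100, min_samples=20):
        self.baseline_accuracy = baseline_accuracy
        self.max_drop = max_drop
        self.min_samples = min_samples
        self._outcomes = deque(maxlen=window)  # oldest outcomes fall off automatically

    def record(self, prediction, actual):
        self._outcomes.append(prediction == actual)

    def current_accuracy(self):
        if not self._outcomes:
            return None
        return sum(self._outcomes) / len(self._outcomes)

    def should_alert(self):
        # Avoid noisy alerts until enough labeled outcomes have arrived.
        if len(self._outcomes) < self.min_samples:
            return False
        return self.current_accuracy() < self.baseline_accuracy - self.max_drop


monitor = RollingAccuracyMonitor(baseline_accuracy=0.90, window=10, min_samples=5)
for prediction, actual in [("ham", "ham"), ("ham", "spam"), ("spam", "ham"),
                           ("ham", "spam"), ("ham", "spam")]:
    monitor.record(prediction, actual)

if monitor.should_alert():
    print("accuracy drift detected: consider rollback or retraining")
```

The same alert signal could feed either the rollback plan or a retraining trigger; which one applies is a policy decision rather than something this sketch decides.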

Safety, Bias, and Governance

Safety and governance are essential components of AI assembly. Identify potential failure modes, privacy risks, and bias sources early. Establish guardrails such as input validation, rate limiting, and content filters. Implement fairness checks across demographic groups and test for disparate impact. Create an incident response plan and assign ownership for monitoring, reporting, and remediation. Align the project with regulatory requirements and industry standards. Regularly review data handling practices, access controls, and retention policies to minimize risk and protect user trust.
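One common way to test for disparate impact is to compare the rate of favorable outcomes across groups. The sketch below computes that ratio; the group names and counts are invented, and the 0.8 cutoff is the widely cited "four-fifths" rule of thumb, used here as an assumption rather than a legal or complete fairness test.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group, favorable_outcome) pairs."""
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            favorable[group] += 1
    return {group: favorable[group] / totals[group] for group in totals}


def disparate_impact_ratio(records):
    """Lowest group selection rate divided by the highest."""
    rates = selection_rates(records)
    if not rates:
        raise ValueError("no records provided")
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group received a favorable outcome")
    return min(rates.values()) / highest


# Invented example: group_a gets 8 of 10 favorable outcomes, group_b gets 4 of 10.
records = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2
    + [("group_b", True)] * 4 + [("group_b", False)] * 6
)

ratio = disparate_impact_ratio(records)
if ratio < 0.8:  # rule-of-thumb threshold (assumption)
    print(f"possible disparate impact: ratio = {ratio:.2f}")
```

A low ratio is a prompt for investigation, not a verdict; it should be read alongside per-group error rates and the context in which the model is used.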

Authority Sources

For further reading and verification, consult credible sources to ground your work in best practices. Useful references include:

- https://www.nist.gov/topics/artificial-intelligence

- https://ai.stanford.edu

- https://arxiv.org/

These sources provide guidance on AI safety, governance, and research benchmarks that can help shape your AI assembly process.

Disasembl Insights: Practical Tips and Conclusion

The Disasembl team emphasizes adopting an iterative, modular approach to AI assembly. Start with a small, well-scoped problem and gradually expand, ensuring each increment is testable and well-documented. Prioritize data governance and safety from day one to avoid costly rework later. Maintain clear communication with stakeholders and keep a running record of decisions and results. In the end, a disciplined process—grounded in reproducibility, ethics, and continuous improvement—delivers reliable AI systems that stakeholders can trust.

Tools & Materials

- High-performance computer (modern multi-core CPU; 16 GB RAM minimum; stable internet)

- Development environment (Python 3.x; an IDE such as VS Code; virtual environments)

- ML framework (choose PyTorch or TensorFlow; install via pip or conda)

- Version control (Git for code and experiment tracking)

- Datasets with licensing (ensure data rights and consent; document provenance)

- Notebook environment (Jupyter or Colab for prototyping)

- Experiment tracking (Weights & Biases, MLflow, or simple spreadsheets)

- Containerization (Docker for reproducible environments)

Steps

Estimated time: 6-12 hours

1. Define objective

Translate the problem into a practical, measurable objective. Identify who benefits and what success looks like. Establish success criteria that align with user needs and business impact.

Tip: Capture a one-sentence success statement to guide decisions.

2. Audit data

Inventory available data, assess labeling quality, and check licensing and privacy constraints. Build a data provenance plan to track sources and permissions.

Tip: Create a data eligibility checklist before collecting or labeling data.

3. Choose architecture

Select a model approach and pipeline structure that fits the objective and data. Consider simplicity, interpretability, and resource constraints as you design modules.

Tip: Favor a modular architecture to swap components easily.

4. Set up environment

Create a clean development environment with explicit dependencies and version control. Establish a reproducible workflow for experiments and results.

Tip: Use containerization to lock dependencies and tooling.

5. Prepare data

Preprocess data, handle missing values, normalize features, and split into train/validation/test sets. Validate labeling consistency and quality.

Tip: Document preprocessing steps so others can reproduce results.

6. Train baseline

Train a lightweight baseline model to establish a reference point. Monitor training time, convergence, and initial performance.

Tip: Keep hyperparameters simple at first to isolate effects.

7. Evaluate and iterate

Assess metrics on validation data, perform ablations, and compare variants. Address data leakage and overfitting with proper controls.

Tip: Document all experiments with run IDs and outcomes.

8. Deploy and monitor

Move a proven model into production with defined monitoring and alerting. Establish retraining triggers and a rollback plan.

Tip: Implement a lightweight canary deployment to test in production.

Got Questions?

What does it mean to assemble AI?

AI assembly is an end-to-end process that turns an abstract problem into a deployable AI system, including defining objectives, data collection, model selection, training, evaluation, deployment, and ongoing governance.

AI assembly is the end-to-end process of turning a problem into a deployable AI system, with ongoing governance.

Do I need to be a coder to start?

Some coding knowledge helps, but you can prototype with no-code tools. To scale and customize, basic programming in languages like Python is beneficial.

Basic coding helps, especially for scaling and customization.

What data do I need to begin?

You need relevant, labeled data with clear licensing and consent. Start with a small, high-quality dataset and document data provenance.

Start with relevant, licensed data and document where it comes from.

How long does the process take?

Time varies with scope, but a small prototype can be built in hours and a full project may take days. Plan for iterative cycles.

It depends on scope, but expect several iterative cycles.

How can I ensure safety and fairness?

Establish guardrails, conduct bias checks, and implement auditing. Integrate privacy safeguards and transparent reporting from the start.

Set guardrails, run bias checks, and audit the model regularly.

What if I lack hardware or cloud access?

Start with smaller datasets and lower-footprint models. Use cloud-based free tiers or shared compute to prototype before investing in hardware.

Use smaller datasets and free cloud tiers or shared compute to prototype.

What to Remember

- Define clear AI assembly objectives and success metrics.

- Prioritize data governance and ethical safeguards.

- Prototype early and iterate with measurable results.

- Maintain reproducible environments and thorough documentation.

- Plan deployment with monitoring and governance in mind.