Why Assembly Is Faster Than C: A Practical Guide

Analyze why assembly can outperform C on hot paths, with actionable profiling, intrinsics, and architecture-focused tactics from Disasembl. Learn when hand-optimized assembly makes sense and when compiler optimizations suffice.

The question of why assembly is faster than C often hinges on control, latency, and instruction-level optimization. In tight loops and micro-paths, hand-tuned assembly can minimize instruction count, keep hot values in registers, and arrange code and data to suit the CPU pipeline and caches. But real-world gains depend on architecture, toolchain, and disciplined coding. Disasembl findings show that most software benefits from profiling first and targeting only hot paths for assembly-driven tweaks.

Why 'why is assembly faster than C' matters in practice

The question "why is assembly faster than C" is more than a nostalgia trip for old hardware. It remains a key consideration when performance is dominated by micro-ops, cache behavior, and pipeline stalls. According to Disasembl, the speed gap between hand-tuned assembly and compiler-generated code can be small or substantial depending on how well the compiler maps high-level constructs to the target architecture. In many cases, modern compilers do a remarkable job, but when every cycle counts in a hot loop, developers turn to assembly or intrinsics to shave a few clock cycles and improve instruction density. This article uses Disasembl analysis to illuminate the trade-offs and provide a practical framework for deciding when to invest in assembly.

Core principles: machine-level execution vs abstraction layers

At the lowest level, performance is governed by instruction latency, throughput, and the CPU’s ability to hide latency with pipelining and out-of-order execution. Assembly exposes exact instruction choices, register usage, and memory addressing modes, giving the programmer direct leverage over these factors. C, by contrast, introduces abstractions that can obscure register pressure or instruction scheduling. The decisive question is where those abstractions begin to hinder performance on the target hardware.
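The difference between latency and throughput can be illustrated without leaving C. The sketch below is an illustration, not a measured result: `sum_single` and `sum_unrolled4` are hypothetical names, and the benefit of the second version depends on the CPU and compiler flags. The first loop forms one long dependency chain, so each add waits for the previous one. The second keeps four independent chains that an out-of-order core can overlap.

```c
#include <stddef.h>

/* One accumulator: every add depends on the previous add, so the loop
   is limited by the latency of a floating-point add. */
double sum_single(const double *x, size_t n) {
    double s = 0.0;
    for (size_t i = 0; i < n; i++)
        s += x[i];
    return s;
}

/* Four independent accumulators: the four adds in one iteration do not
   depend on each other, so the CPU can keep several in flight at once. */
double sum_unrolled4(const double *x, size_t n) {
    double s0 = 0.0, s1 = 0.0, s2 = 0.0, s3 = 0.0;
    size_t i = 0;
    for (; i + 4 <= n; i += 4) {
        s0 += x[i];
        s1 += x[i + 1];
        s2 += x[i + 2];
        s3 += x[i + 3];
    }
    for (; i < n; i++)      /* leftover elements when n is not a multiple of 4 */
        s0 += x[i];
    return (s0 + s1) + (s2 + s3);
}
```

Because floating-point addition is not associative, GCC and Clang generally do not reorder a sum like this on their own unless reassociation is explicitly allowed (for example with `-ffast-math`). The two functions can also return slightly different results for inputs that are not exactly representable.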

The cost of abstraction in C

C shines with portability and readability, but its abstractions can incur hidden costs. Calls that cannot be inlined, implicit temporaries, and the conservative assumptions a compiler must make about pointer aliasing can limit throughput in numerically intensive kernels. While aggressive optimization flags in compilers can dramatically reduce some of these costs, there are still cases where the path to maximum performance requires explicit control over registers, stack frames, and instruction ordering. Disassembling such routines often reveals opportunities for targeted improvements that are less feasible in high-level code.
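Pointer aliasing is one such hidden cost that can often be addressed in C before reaching for assembly. In the sketch below (function names are hypothetical), the first version forces the compiler to assume `dst` and `src` might overlap. The second uses the C99 `restrict` qualifier to promise that they do not.

```c
#include <stddef.h>

/* The compiler must assume dst and src may overlap, so it either keeps
   the loads and stores in strict order or adds a runtime overlap check. */
void scale_add(float *dst, const float *src, float k, size_t n) {
    for (size_t i = 0; i < n; i++)
        dst[i] += k * src[i];
}

/* restrict promises the arrays do not overlap, which gives the optimizer
   more freedom to reorder and vectorize. Calling this with overlapping
   arrays is undefined behavior. */
void scale_add_restrict(float *restrict dst, const float *restrict src,
                        float k, size_t n) {
    for (size_t i = 0; i < n; i++)
        dst[i] += k * src[i];
}
```

Whether the two versions compile to different machine code depends on the compiler and flags, so it is worth comparing the disassembly of both before drawing conclusions.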

Hand-tuned assembly vs compiler-generated code

Hand-tuned assembly gives you exact control over the sequence of operations, the register allocation, and how data moves through caches. This can produce leaner, faster loops and tighter inner kernels. However, the risk is higher maintenance burden and reduced portability. Compiler-generated code benefits from automated optimizations, inlining, vectorization, and continual improvements across compiler versions. The decision to write assembly should be guided by profiling outcomes and a clear plan for validation across architectures.

Identifying hot paths to optimize

Profiling is the first step. Look for functions with disproportionately high cycle counts, memory-bound patterns, or branches that mispredict frequently. When a candidate path is small and highly sensitive to instruction mix, hand-optimizing it can yield outsized gains. Disasembl recommends starting with a precise microbenchmark on the target CPU and isolating the kernel from surrounding logic to measure true impact before integrating assembly fragments into larger codebases.
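A minimal microbenchmark harness along these lines might look like the following sketch. `kernel_sum` is a hypothetical stand-in for the isolated hot path, and `bench_seconds` is an illustrative helper, not part of any library. It uses the standard `clock()` function, which measures CPU time at coarse resolution, so a real harness would likely run the kernel many times per sample or use a platform-specific high-resolution timer.

```c
#include <stddef.h>
#include <stdint.h>
#include <time.h>

/* Hypothetical kernel under test, isolated from surrounding logic. */
uint64_t kernel_sum(const uint32_t *data, size_t n) {
    uint64_t total = 0;
    for (size_t i = 0; i < n; i++)
        total += data[i];
    return total;
}

/* Runs fn `reps` times and returns the fastest run in seconds.
   Each result is written through a volatile pointer so the compiler
   cannot discard the calls as dead code. Returns -1.0 if reps <= 0. */
double bench_seconds(uint64_t (*fn)(const uint32_t *, size_t),
                     const uint32_t *data, size_t n, int reps,
                     volatile uint64_t *sink) {
    double best = -1.0;
    for (int r = 0; r < reps; r++) {
        clock_t t0 = clock();
        *sink = fn(data, n);
        clock_t t1 = clock();
        double s = (double)(t1 - t0) / CLOCKS_PER_SEC;
        if (best < 0.0 || s < best)
            best = s;
    }
    return best;
}
```

Taking the minimum over several runs is one common way to reduce noise from interrupts and frequency changes. The candidate assembly or intrinsics version can be passed through the same function pointer for a like-for-like comparison.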

Memory layout, data structures, and cache behavior

Performance is not only about fewer instructions; it’s about how data flows through the memory hierarchy. Assembly allows tight control over data alignment, prefetching, and memory access patterns that reduce cache misses. In C, you can get similar effects through alignment specifiers, careful struct layout, and compiler-specific prefetch builtins, but assembly makes it explicit. The takeaway is to align data with cache lines and minimize memory stalls in the critical path.
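As a rough sketch of what this looks like from C, the example below aligns a structure to an assumed 64-byte cache line with C11 `alignas` and issues a software prefetch with the GCC/Clang builtin `__builtin_prefetch`. The names `CACHE_LINE`, `padded_counter`, and `sum_with_prefetch` are illustrative, and both the 64-byte line size and the prefetch distance of 16 elements are assumptions that would need tuning per CPU.

```c
#include <stdalign.h>
#include <stddef.h>
#include <stdint.h>

#define CACHE_LINE 64   /* assumption: typical line size, not universal */

/* Aligning the member to a cache line pads the struct to a full line,
   so adjacent counters in an array never share a line. */
struct padded_counter {
    alignas(CACHE_LINE) uint64_t value;
};

/* Sums an array while hinting the cache about data needed soon. */
uint64_t sum_with_prefetch(const uint64_t *data, size_t n) {
    uint64_t total = 0;
    for (size_t i = 0; i < n; i++) {
#if defined(__GNUC__) || defined(__clang__)
        if (i + 16 < n)
            __builtin_prefetch(&data[i + 16], 0, 3); /* read, keep in cache */
#endif
        total += data[i];
    }
    return total;
}
```

For a simple linear scan like this one, hardware prefetchers usually do the job already, so the explicit hint may bring no benefit or even cost a little. Software prefetch tends to matter more for irregular access patterns, which is another reason to measure first.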

Intrinsics and inline assembly: a middle path

Intrinsics offer a safer compromise by exposing vector units and special instructions without writing full assembly. Inline assembly provides more control but also more risk. Both approaches can bridge the gap between pure C and hand-crafted assembly, enabling architecture-specific optimizations while retaining higher-level structure. Assess whether intrinsics achieve your goals with maintainable code before resorting to raw assembly.
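To make the intrinsics option concrete, here is a small sketch that adds two float arrays four elements at a time using SSE intrinsics from `<xmmintrin.h>`, with a plain C fallback when SSE is not available at compile time. The function name `add_arrays` is hypothetical. The `__SSE__` macro is what GCC and Clang define, and other compilers may need a different check.

```c
#include <stddef.h>
#if defined(__SSE__)
#include <xmmintrin.h>
#endif

/* out[i] = a[i] + b[i] for i in [0, n). */
void add_arrays(float *out, const float *a, const float *b, size_t n) {
    size_t i = 0;
#if defined(__SSE__)
    /* Process four floats per iteration with unaligned loads and stores. */
    for (; i + 4 <= n; i += 4) {
        __m128 va = _mm_loadu_ps(a + i);
        __m128 vb = _mm_loadu_ps(b + i);
        _mm_storeu_ps(out + i, _mm_add_ps(va, vb));
    }
#endif
    /* Scalar tail, and the whole loop on targets without SSE. */
    for (; i < n; i++)
        out[i] = a[i] + b[i];
}
```

The compiler still handles register allocation and scheduling here, which is much of what makes intrinsics easier to maintain than raw assembly. A loop this simple is also one that many compilers auto-vectorize on their own, so the intrinsics version is worth keeping only if measurement shows a gain.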

Architecture-specific considerations: x86-64, ARM, and beyond

The feasibility and payoff of assembly vary by architecture. For x86-64, feature-rich instruction sets and mature assemblers offer robust optimization opportunities. ARM and RISC-V have different trade-offs, such as different SIMD extensions and vector widths (for example NEON and SVE on ARM, or the vector extension on RISC-V) and weaker memory-ordering models than x86. A strategy that pays off on one architecture may not translate directly to another; portability constraints often argue for targeted assembly on the most performance-critical path, rather than blanket hand-assembly across the codebase.
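One way to keep architecture-specific code contained is to select an implementation at run time and fall back to portable C everywhere else. The sketch below assumes GCC or Clang on x86, where the builtins `__builtin_cpu_init` and `__builtin_cpu_supports` are available. The names `sum_generic`, `sum_wide_placeholder`, and `select_sum` are hypothetical, and the "tuned" path is a plain C placeholder so the example runs on any target.

```c
#include <stddef.h>
#include <stdint.h>

typedef uint64_t (*sum_fn)(const uint32_t *, size_t);

/* Portable baseline used on every target. */
uint64_t sum_generic(const uint32_t *p, size_t n) {
    uint64_t total = 0;
    for (size_t i = 0; i < n; i++)
        total += p[i];
    return total;
}

/* Placeholder for a version tuned for AVX2-capable CPUs. It is ordinary
   C here; a real project would put its intrinsics or assembly behind it. */
uint64_t sum_wide_placeholder(const uint32_t *p, size_t n) {
    uint64_t t0 = 0, t1 = 0;
    size_t i = 0;
    for (; i + 2 <= n; i += 2) {
        t0 += p[i];
        t1 += p[i + 1];
    }
    if (i < n)
        t0 += p[i];
    return t0 + t1;
}

/* Picks an implementation once, based on what the running CPU supports. */
sum_fn select_sum(void) {
#if (defined(__x86_64__) || defined(__i386__)) && defined(__GNUC__)
    __builtin_cpu_init();
    if (__builtin_cpu_supports("avx2"))
        return sum_wide_placeholder;
#endif
    return sum_generic;
}
```

A caller would typically invoke `select_sum()` once at startup and store the returned pointer. Every path must produce the same results, so the portable version doubles as the reference for testing the tuned one.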

Practical workflow: from profiling to implementation

A disciplined process begins with measurement, not guesswork. Profile, isolate, and reproduce the hot path in a microbenchmark. If gains are plausible, prototype in assembly or intrinsics, then re-run end-to-end benchmarks to confirm real-world impact. Finally, integrate changes with comprehensive tests to guard against regressions, particularly across compiler versions and hardware generations.
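The validation step in this workflow can be as simple as comparing the optimized routine against a slow, obviously correct reference on many inputs. The sketch below uses a bit-counting function as a stand-in for the hot path. `popcount_ref`, `popcount_fast`, and `matches_reference` are illustrative names, and the small linear congruential generator is there only to make the test inputs reproducible.

```c
#include <stddef.h>
#include <stdint.h>

/* Reference: count set bits one at a time. Slow but easy to trust. */
uint32_t popcount_ref(uint32_t x) {
    uint32_t count = 0;
    while (x) {
        count += x & 1u;
        x >>= 1;
    }
    return count;
}

/* Candidate: branch-free bit-parallel count, standing in for a
   hand-optimized routine. */
uint32_t popcount_fast(uint32_t x) {
    x = x - ((x >> 1) & 0x55555555u);
    x = (x & 0x33333333u) + ((x >> 2) & 0x33333333u);
    x = (x + (x >> 4)) & 0x0F0F0F0Fu;
    return (x * 0x01010101u) >> 24;
}

/* Returns 1 if the candidate agrees with the reference on the edge cases
   and on `trials` pseudo-random inputs derived from `seed`, else 0. */
int matches_reference(uint32_t seed, size_t trials) {
    if (popcount_fast(0u) != popcount_ref(0u)) return 0;
    if (popcount_fast(0xFFFFFFFFu) != popcount_ref(0xFFFFFFFFu)) return 0;
    uint32_t state = seed;
    for (size_t i = 0; i < trials; i++) {
        state = state * 1664525u + 1013904223u;  /* simple LCG step */
        if (popcount_fast(state) != popcount_ref(state))
            return 0;
    }
    return 1;
}
```

Keeping the reference implementation in the codebase also gives other architectures a ready-made fallback and documents what the optimized version is supposed to compute.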

Pitfalls and best practices you should follow

Avoid premature optimization; the cost of maintenance often outweighs marginal gains. Keep assembly focused on critical sections, document intent clearly, and constrain architecture-specific paths with guards or feature checks. Use version-controlled, well-commented inline assembly or separate assembler files, and maintain a clear mapping to the high-level algorithm so future developers understand the rationale.
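A guarded, documented assembly fragment might be structured like the following sketch. It is deliberately a toy: a 32-bit add gains nothing from assembly, and `add_u32` is a hypothetical name. The point is the shape: a compile-time guard, a comment stating intent, and a plain C fallback with identical behavior. The syntax is GCC/Clang extended inline assembly in the default AT&T operand order.

```c
#include <stdint.h>

/* Intent: return a + b with 32-bit wraparound.
   High-level equivalent: (uint32_t)(a + b). */
uint32_t add_u32(uint32_t a, uint32_t b) {
#if defined(__x86_64__) && defined(__GNUC__)
    /* AT&T order: addl source, destination. Operand 0 (a) is read and
       written; operand 1 (b) is read only. "cc" tells the compiler the
       flags register is modified. */
    __asm__("addl %1, %0" : "+r"(a) : "r"(b) : "cc");
    return a;
#else
    /* Portable fallback for every other compiler and architecture. */
    return a + b;
#endif
}
```

Both branches can be exercised by the same tests, which helps catch a fallback that silently drifts from the assembly path.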

The takeaway for builders: disciplined optimization over brute force

For most software, compiler optimizations plus thoughtful algorithmic improvements deliver the bulk of performance gains. Assembly remains a powerful tool for hot paths where profiling identifies clear, architecture-specific bottlenecks. The key is a deliberate, evidence-based approach guided by data and risk assessment. As Disasembl emphasizes, measure first, optimize second, and preserve correctness above all.

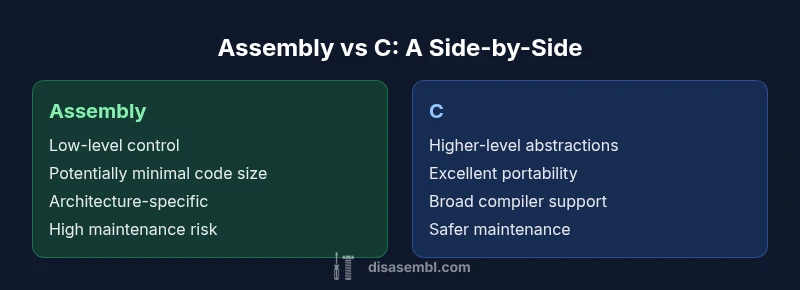

Comparison

| Feature | Assembly | C |
|---|---|---|
| Abstraction Level | Low-level, explicit hardware control | High-level abstractions with compiler mapping |
| Control over Instructions | Full command over registers, memory addressing, and scheduling | Compiler-driven scheduling with possible inline strategies |
| Portability | Architecture-specific; requires porting for different CPUs | Source-level portability across platforms; the compiler handles each target's ABI |
| Tooling Maturity | Assembler/linker toolchain; mature but specialized | Mature compilers with optimizers, vectorizers, and analyzers |
| Best For | Tight hot paths, micro-optimizations, low-latency kernels | General-purpose software with broad deployment and readability |
| Code Size & Maintenance | Potentially smaller in critical paths but harder to maintain | Typically larger but easier to maintain and evolve |

Benefits

- Potentially maximal performance on hot paths

- Fine-grained control over memory and registers

- Leaner code in highly specialized routines

- Better predictability for microarchitectural behavior

Drawbacks

- Low portability across architectures

- High maintenance burden and reduced readability

- Longer development cycles and higher risk of errors

- Fragmented toolchains with architecture-specific quirks

Hand-tuned assembly offers clear gains in specific hot paths; use it selectively.

Profile-driven use of assembly can yield measurable improvements in micro-paths. For most code, rely on compiler optimizations and algorithmic improvements first; reserve assembly for verified hotspots with a clear maintainability plan.

Got Questions?

Is assembly always faster than C?

No. Assembly can outperform C in tightly scoped hot paths, but compiler optimizations often eliminate the gap. The real advantage depends on architecture, data access patterns, and how well the code maps to the processor’s pipeline. Always profile before deciding.

No. Assembly can win in hot paths, but profiling will show if the gains justify the cost.

When should I consider hand-assembly?

Only for critical kernels where micro-architectural details strongly influence throughput. Start with profiling, prototype with intrinsics, and evaluate maintainability. If gains are marginal, a compiler-based approach is preferred.

Only for critical kernels where architecture matters a lot; prototype with intrinsics first.

What are intrinsics and why use them?

Intrinsics expose specific CPU instructions without full assembly. They strike a balance between performance and portability, enabling vectorization and specialized operations while keeping the code more maintainable than raw assembly.

Intrinsics give you CPU-specific tricks with safer, more portable code.

How does portability affect decisions?

Assembly is inherently architecture-specific, which complicates cross-platform deployment. If you need broad support, limit assembly to architecture-optimized paths and provide fallbacks for other targets.

Portability pushes you to restrict assembly to hot paths with solid fallbacks.

Can compiler improvements close the gap?

Yes, modern compilers can approach hand-optimized performance on many tasks. However, there are scenarios where manual tuning still yields benefits, especially on specialized hardware.

Compilers can close a lot of gaps, but some cases still benefit from tuning.

What about safety and correctness?

Manual assembly increases the risk of defects. Rigorous testing, clear documentation, and checks across compilers and hardware are essential to maintain correctness.

Be extra careful with correctness and tests when using assembly.

What to Remember

- Profile first, then optimize the hot path

- Use assembly only where measurements show clear gains

- Consider intrinsics as a safer alternative

- Account for architecture-specific differences

- Maintain a strong focus on correctness and testing