How to Find Assemble Code Rivals: A Step-by-Step Disassembly Guide

Learn how to locate assemble code rivals with a practical, ethical disassembly workflow. Step-by-step methods, sources, and tools to compare opcode patterns and map findings to hardware.

By the end of this guide, you will learn how to find assemble code rivals by analyzing publicly available assembly snippets, comparing opcode patterns, and mapping findings to devices. Key requirements are basic assembly knowledge, access to online code repositories, and safe, ethical research practices. According to Disasembl, following a structured, step-by-step approach yields reliable, repeatable results.

How to find assemble code rivals: an in-depth overview

The phrase “how to find assemble code rivals” captures a practical goal for DIY researchers and professionals: identifying and understanding competing or similar code patterns across devices and platforms. This guide treats rival code as a set of observable assembly-language patterns that recur across multiple samples, not as a value judgement. By examining opcode sequences, control flow, and data handling, you can work out why implementations differ and how attackers or defenders might approach similar problems. The Disasembl approach emphasizes reproducibility, proper sourcing, and clear documentation. Throughout this section, you’ll see how to turn raw snippets into actionable insights while keeping your analysis transparent and auditable.

Key ideas include: defining a reproducible workflow, recognizing common instruction sequences, and correlating patterns with architecture features. If you’re wondering how to find assemble code rivals effectively, start by outlining your hypotheses, then test them against diverse samples. This disciplined mindset helps prevent cherry-picking results and strengthens your conclusions for the rest of the guide. Finally, remember that the goal is learning and improvement, not blame or competition.

Disasembl’s framework supports beginners and advanced researchers alike by prioritizing clarity, safety, and replicable steps. By following the steps in this article, you’ll build a robust foundation for understanding rivals’ assembly strategies and refining your own disassembly workflows.

Ethical ground rules for rival-code analysis

When exploring assemble code rivals, ethics and legality are non-negotiable. Obtain explicit permission when analyzing code tied to proprietary hardware or software, and avoid distributing sensitive samples. Maintain a transparent audit trail: record sources, versions, and the exact steps you took to replicate results. If you or your organization shares findings, redact sensitive identifiers or use synthetic examples for demonstrations. According to Disasembl, ethical practices improve trust and enable safer, more productive learning experiences for everyone involved.

In addition, always respect licenses and terms of use for any code you study. Public samples can be useful for education, but distribution of restricted or compiled binaries may violate laws or contractual obligations. Keep personal and corporate data separate from your analysis, especially when testing on real devices. Finally, consider adding a safety review to your process to catch potential risks before you publish or apply your findings.

Gathering sources: where to look for assemble code patterns

A reliable analysis begins with high-quality sources. Start with publicly available repositories that host disassemblies, such as educational projects, open-source firmware samples, and university course materials. Save references with precise URLs and version tags to ensure reproducibility. You can also collect documentation on target architectures and compilers to provide context for opcode choices. When you encounter a sample, note the compiler version and optimization level, as these factors strongly influence instruction selection. Disassemblers can vary in how they render code, so cross-check samples with multiple tools to confirm patterns and reduce misinterpretation.

To organize your data, create a structured catalog: sample ID, device/hardware alias, ISA/architecture, compiler, optimization flag, and a short description of the observed patterns. This structured approach helps you later compare across samples, rather than relying on memory or intuition. In practice, you’ll benefit from starting with broad searches, then narrowing to specific families where patterns emerge. Finally, maintain a changelog as you add new samples to track what you’ve learned and how interpretations evolve over time.
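The catalog described above could be sketched in Python as follows. This is a minimal illustration, not a prescribed schema: the class name `SampleRecord`, the helper `catalog_to_csv`, the extra `source_url` field, and the example rows (including the example.com URLs) are all assumptions made for this sketch.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class SampleRecord:
    """One catalog row; field names are illustrative, not a standard."""
    sample_id: str
    device_alias: str
    isa: str
    compiler: str
    optimization_flag: str
    description: str
    source_url: str = ""  # assumption: added so each row can point at its source


def catalog_to_csv(records):
    """Serialize records to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer,
        fieldnames=[f.name for f in fields(SampleRecord)],
        lineterminator="\n",
    )
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


# Hypothetical entries for demonstration only.
catalog = [
    SampleRecord("S001", "board-a", "x86-64", "gcc", "-O2",
                 "frame-pointer prologue", "https://example.com/sample-a"),
    SampleRecord("S002", "board-b", "ARMv7", "clang", "-Os",
                 "push/pop prologue", "https://example.com/sample-b"),
]
print(catalog_to_csv(catalog))
```

Keeping the catalog as plain CSV makes it easy to diff under version control, which fits the changelog habit mentioned above.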

Core analysis: comparing opcodes and instruction streams

The heart of discovering rivals’ assembly code lies in comparing opcode sequences and control-flow graphs. Begin by normalizing samples: map equivalent instructions to a common form (for example, a memory-to-register mov on x86 and an ldr on ARM both perform a load, and AT&T-syntax suffixes such as movl and movq denote the same mov operation at different operand sizes), align function boundaries, and set aside non-code sections such as data tables when focusing on behavior. Look for recurring motifs: prologues/epilogues, common switch-case structures, and frequently used calling conventions. Create a matrix of observed opcodes and their frequencies across samples, then cluster samples by similarity. This helps reveal whether different devices or compilers converge on similar strategies, which often signals that rivals target analogous use cases or hardware features.

When patterns diverge, investigate the cause: different ISA extensions, alternative instruction encodings, or varying optimization levels. Document why a pattern exists and how it affects performance or security implications. A disciplined comparison reduces bias and yields a more robust understanding of rival code dynamics. As noted by Disasembl, reproducible comparisons and clear justification are crucial for credible results.
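As a rough sketch of the normalization and frequency-matrix idea in this section, the Python below counts normalized mnemonics per sample and scores sample pairs with cosine similarity. The `CANONICAL` map, the function names, and the three toy samples are assumptions for illustration. Cosine similarity is one possible similarity measure, not one the guide prescribes, and a clustering step would be built on top of these pairwise scores.

```python
import math
from collections import Counter

# Assumption: a tiny, hand-written normalization map for AT&T size suffixes.
CANONICAL = {"movb": "mov", "movw": "mov", "movl": "mov", "movq": "mov"}


def normalize(mnemonics):
    """Lower-case mnemonics and fold known variants into one canonical name."""
    return [CANONICAL.get(m.lower(), m.lower()) for m in mnemonics]


def frequency_matrix(samples):
    """Return (vocabulary, {sample_name: count vector over the vocabulary})."""
    counts = {name: Counter(normalize(ops)) for name, ops in samples.items()}
    vocab = sorted(set().union(*counts.values()))
    matrix = {name: [c[op] for op in vocab] for name, c in counts.items()}
    return vocab, matrix


def cosine_similarity(a, b):
    """Cosine of the angle between two count vectors (0.0 if either is empty)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical mnemonic streams, already extracted from disassembly.
samples = {
    "sample_a": ["push", "movq", "sub", "call", "pop", "ret"],
    "sample_b": ["push", "movl", "sub", "call", "pop", "ret"],
    "sample_c": ["ldr", "ldr", "add", "str", "bx"],
}

vocab, matrix = frequency_matrix(samples)
names = sorted(matrix)
for i, left in enumerate(names):
    for right in names[i + 1:]:
        score = cosine_similarity(matrix[left], matrix[right])
        print(f"{left} vs {right}: {score:.2f}")
```

Frequency vectors ignore instruction order, so a high score is only a prompt for manual inspection, not proof that two samples share an implementation.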

Cross-platform validation: architecture and compiler effects

Rivals’ code often reflects the constraints of the underlying architecture and the chosen compiler pipeline. Validate your findings by testing across multiple platforms and toolchains. For example, compare how the same high-level logic is realized on different ISAs (x86, ARM, MIPS) and with different compiler versions or optimization levels. This helps distinguish genuine strategic choices from incidental differences. Keep a careful log of the environment for each sample: CPU, OS, compiler, and version numbers. If possible, replicate samples in a controlled lab or sandbox to confirm results. When results are inconsistent, re-check source samples and tool configurations to rule out preprocessing artifacts or disassembly inaccuracies.
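One way to keep the environment log described above is to capture it programmatically next to each sample. The sketch below uses Python's standard `platform`, `shutil`, and `subprocess` modules. The function names and the default tool list (gcc, clang, objdump) are assumptions, and tools that are not installed are simply recorded as `None`.

```python
import json
import platform
import shutil
import subprocess


def tool_version(command):
    """Return the first line of `<command> --version`, or None if unavailable."""
    if shutil.which(command) is None:
        return None
    try:
        result = subprocess.run(
            [command, "--version"],
            capture_output=True, text=True, timeout=10, check=False,
        )
    except (OSError, subprocess.TimeoutExpired):
        return None
    output = (result.stdout or result.stderr).strip()
    return output.splitlines()[0] if output else None


def capture_environment(tools=("gcc", "clang", "objdump")):
    """Collect CPU, OS, and toolchain versions for the analysis log."""
    return {
        "cpu": platform.machine(),
        "os": platform.system(),
        "os_release": platform.release(),
        "tools": {tool: tool_version(tool) for tool in tools},
    }


print(json.dumps(capture_environment(), indent=2))
```

Storing this JSON alongside each sample's catalog entry makes it easier to tell a genuine pattern difference from a toolchain difference later.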

Disassemblers and debuggers have varying interpretations of instruction boundaries and addressing modes. By using multiple tools and cross-validating, you improve confidence in your conclusions and minimize misinterpretation. Ethical caveats remain important here: do not reveal sensitive details or techniques that could facilitate wrongdoing, and always adhere to applicable laws and licenses.
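To make the cross-validation idea concrete, the following sketch compares the mnemonic streams that two disassemblers produced for the same function and reports where they disagree. It uses `difflib.SequenceMatcher` from the Python standard library. The function name and the two example streams are hypothetical, and extracting the streams from each tool's output is assumed to have happened already.

```python
import difflib


def compare_mnemonic_streams(tool_a, tool_b):
    """Return (similarity ratio, list of disagreements) for two mnemonic lists."""
    matcher = difflib.SequenceMatcher(a=tool_a, b=tool_b, autojunk=False)
    disagreements = [
        (tag, tool_a[i1:i2], tool_b[j1:j2])
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]
    return matcher.ratio(), disagreements


# Hypothetical output: one tool decodes a padding byte as nop, the other skips it.
from_tool_a = ["push", "mov", "call", "nop", "ret"]
from_tool_b = ["push", "mov", "call", "ret"]

ratio, disagreements = compare_mnemonic_streams(from_tool_a, from_tool_b)
print(f"agreement ratio: {ratio:.2f}")
for tag, left, right in disagreements:
    print(f"{tag}: tool A {left} vs tool B {right}")
```

Each reported disagreement is a spot to inspect by hand, since it may reflect a difference in how the tools chose instruction boundaries rather than a real difference in the code.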

From analysis to practical guidance: turning findings into actionable insights

The ultimate goal is to convert analysis into practical disassembly guidance you can rely on for your own projects. Translate observed patterns into checklist-style notes: recurring instructions to watch for, typical prologue/epilogue conventions, and commonly used idioms that reveal compiler preferences. Use these notes to inform your own disassembly or reverse-engineering tasks, and, where appropriate, to build safer, more robust software designs (for example, by understanding how rivals exploit or bypass certain patterns). Maintain a living document that links each insight to its source sample and context, so future researchers can reproduce your conclusions. As the Disasembl team emphasizes, clear, modular documentation accelerates learning and reduces the risk of misinterpretation.

Maintaining a scalable, repeatable workflow

Finally, ensure your process scales with more samples and broader goals. Automate data collection where possible: scripts to fetch samples, standardize metadata, and generate initial opcode histograms. Create templates for analysis reports to maintain consistency as you grow the dataset. Schedule periodic reviews to revalidate conclusions against new samples and evolving architectures. By treating this as an ongoing practice rather than a one-off activity, you’ll stay ahead of evolving rivals and sharpen your own disassembly skill set.
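As a starting point for the automation mentioned above, this sketch builds an opcode histogram from a disassembly listing. It assumes the tab-separated layout that GNU objdump -d typically prints (address, raw bytes, then mnemonic and operands); other tools format their output differently and would need their own parser. The embedded listing is a made-up example, and prefixed instructions such as rep or lock forms are counted under the prefix, which is a known limitation of this simple approach.

```python
from collections import Counter


def opcode_histogram(listing):
    """Count mnemonics in an objdump-style listing (tab-separated columns)."""
    histogram = Counter()
    for line in listing.splitlines():
        parts = line.split("\t")
        # Instruction lines look like "<address>:", raw bytes, mnemonic + operands.
        # Headers, blank lines, and byte-continuation lines have fewer columns.
        if len(parts) < 3 or not parts[0].strip().endswith(":"):
            continue
        tokens = parts[2].split()
        if tokens:
            histogram[tokens[0]] += 1
    return histogram


# Illustrative listing in the assumed layout; not taken from a real binary.
SAMPLE_LISTING = (
    "0000000000401000 <main>:\n"
    "  401000:\t55                   \tpush   %rbp\n"
    "  401001:\t48 89 e5             \tmov    %rsp,%rbp\n"
    "  401004:\tb8 00 00 00 00       \tmov    $0x0,%eax\n"
    "  401009:\t5d                   \tpop    %rbp\n"
    "  40100a:\tc3                   \tret\n"
)

for mnemonic, count in opcode_histogram(SAMPLE_LISTING).most_common():
    print(f"{mnemonic:6} {count}")
```

Running a script like this over every new sample gives a consistent first-pass histogram before any manual analysis begins.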

Tools & Materials

- Computer with internet access (any modern OS: Linux, macOS, or Windows)

- Access to code repositories (GitHub, GitLab, or internal repositories)

- Disassembler tool (e.g., objdump, radare2, IDA-like tools)

- Text editor (for notes, scripts, and documentation)

- Notebook or digital notes (to track hypotheses and results)

- Virtual lab or sandbox (isolated environment for safe testing)

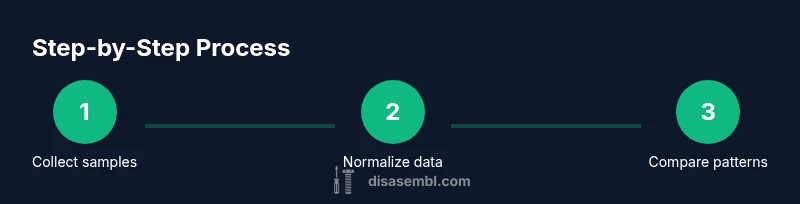

Steps

Estimated total time: 2-3 hours

1. Define your objective

State what you want to learn about rival code and which devices or architectures you will analyze. Clarify the scope to avoid scope creep and ensure the data you collect is relevant.

Tip: Write a one-paragraph objective and keep it visible during the study.

2. Gather diverse samples

Collect publicly available assembly samples from multiple sources, noting the target device, ISA, compiler, and optimization level. Aim for variety to avoid biased conclusions.

Tip: Record exact source URLs and version tags for reproducibility.

3. Normalize samples for comparison

Map equivalent instructions across assemblers, align function boundaries, and strip non-code sections. This makes cross-sample comparisons meaningful.

Tip: Maintain a consistent normalization schema across all samples.

4. Build opcode-pattern matrices

Create a frequency matrix of observed opcodes and sequences. Use clustering to identify groups of similar patterns across samples.

Tip: Use simple clustering first; refine with manual checks.

5. Cross-validate across toolchains

Run the same samples through different disassemblers and compilers to confirm patterns are reliable and not an artifact of a single tool.

Tip: Document tool versions and any discrepancies.

6. Translate findings into guidance

Convert patterns into practical notes: what to watch for, how rivals implement common tasks, and how to adapt your own disassembly approach.

Tip: Link each insight to a concrete sample for traceability.

7. Iterate and expand

Regularly add new samples and update your notes. Schedule periodic reviews to keep your analysis current with evolving architectures.

Tip: Set a cadence, e.g., monthly updates.
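The steps above mention counting opcode sequences as well as single opcodes. One simple way to do that, sketched here under assumptions, is to count n-grams (short runs of consecutive mnemonics) and intersect them across samples. The function names and the two example streams are hypothetical, and n-grams are only one possible way to represent sequences.

```python
from collections import Counter


def opcode_ngrams(mnemonics, n=2):
    """Count every run of n consecutive mnemonics in a sample."""
    if n < 1:
        raise ValueError("n must be at least 1")
    return Counter(
        tuple(mnemonics[i:i + n]) for i in range(len(mnemonics) - n + 1)
    )


def shared_ngrams(sample_a, sample_b, n=2):
    """Return n-grams present in both samples, with the smaller of the two counts."""
    return opcode_ngrams(sample_a, n) & opcode_ngrams(sample_b, n)


# Hypothetical normalized mnemonic streams.
first = ["push", "mov", "sub", "call", "leave", "ret"]
second = ["push", "mov", "call", "leave", "ret"]

for ngram, count in sorted(shared_ngrams(first, second).items()):
    print(" -> ".join(ngram), count)
```

Shared n-grams such as a common prologue or epilogue are natural candidates for the manual checks suggested in the step 4 tip.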

Got Questions?

Is it legal to analyze rival code and share findings?

In many cases, analyzing publicly available samples for educational purposes is legal, but you must respect licenses and terms of use. Do not distribute restricted binaries or sensitive samples. When in doubt, seek legal guidance or permissions from rights holders.

Analyzing publicly available samples for learning can be legal, but never share restricted material. When unsure, consult the license terms or a legal advisor.

What counts as a rival in this context?

A rival in assembly code analysis refers to other samples or implementations that serve a similar function or target the same hardware. The focus is on behavior and patterns, not people or brands.

Rivals are other samples with similar purposes or targets. The emphasis is on patterns and techniques, not competition.

Which tools are best for opcode analysis?

Effective analysis uses a combination of disassemblers, debuggers, and static analysis scripts. Start with widely used open tools and progressively add specialized ones as needed.

Use a mix of free and paid disassemblers and script quick checks to speed up repetitive tasks.

How do you ensure ethical use of findings?

Maintain an ethical framework: do not harm devices, respect licenses, anonymize sensitive data, and publish only responsibly. Document sources and purpose clearly.

Follow an ethics-first approach: respect licenses, anonymize sensitive data, and publish responsibly.

Can open-source samples be used for this analysis?

Yes. Open-source licenses generally permit studying and analyzing the code, which makes these samples a safe, legal starting point for practicing pattern recognition and disassembly techniques, provided you follow each license's terms.

Open-source samples are great for practice and learning; just respect their licenses.

How often should I update my analysis?

Set a regular cadence, such as monthly updates, to incorporate new samples, architectures, and compiler changes.

Update your analysis monthly to stay current with new samples and compiler shifts.


What to Remember

- Define a clear objective before collecting samples

- Normalize data to compare across tools and architectures

- Validate patterns with multiple toolchains

- Document sources and reasoning for repeatability

- Treat findings as an evolving process, not a one-off task